INSERT INTO TABLE tablename [PARTITION (partcol1[=val1], partcol2[=val2] ...)] VALUES values_row [, values_row ...]

UPDATE tablename SET column = value [, column = value ...] [WHERE expression]

DELETE FROM tablename [WHERE expression]


Hive 0.14 and later allow CRUD (Create, Read, Update, Delete) operations, using the INSERT ... VALUES, UPDATE, and DELETE statements shown above.

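As a rough illustration of the syntax above, here is a minimal HiveQL sketch. The `employees` table, its columns, and the bucket count are hypothetical. It assumes the transaction settings are not already configured in `hive-site.xml`, and it does not show the server-side compaction settings. In Hive 0.14, UPDATE and DELETE work only on tables that support ACID transactions, which in practice means a bucketed ORC table marked as transactional.

```sql
-- Session settings commonly needed for ACID operations in Hive 0.14
-- (assumption: not already set cluster-wide).
SET hive.support.concurrency=true;
SET hive.enforce.bucketing=true;
SET hive.exec.dynamic.partition.mode=nonstrict;
SET hive.txn.manager=org.apache.hadoop.hive.ql.lockmgr.DbTxnManager;

-- Hypothetical table: bucketed, stored as ORC, and flagged transactional.
CREATE TABLE employees (
  id     INT,
  name   STRING,
  salary INT
)
CLUSTERED BY (id) INTO 4 BUCKETS
STORED AS ORC
TBLPROPERTIES ('transactional'='true');

-- Create: insert two rows with INSERT ... VALUES.
INSERT INTO TABLE employees VALUES (1, 'Asha', 52000), (2, 'Ravi', 48000);

-- Read: an ordinary SELECT.
SELECT id, name, salary FROM employees WHERE salary > 50000;

-- Update: change a non-bucketing column for matching rows.
UPDATE employees SET salary = 50000 WHERE id = 2;

-- Delete: remove matching rows.
DELETE FROM employees WHERE id = 1;
```

The UPDATE touches `salary` rather than `id` because Hive does not allow the bucketing column to be updated.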


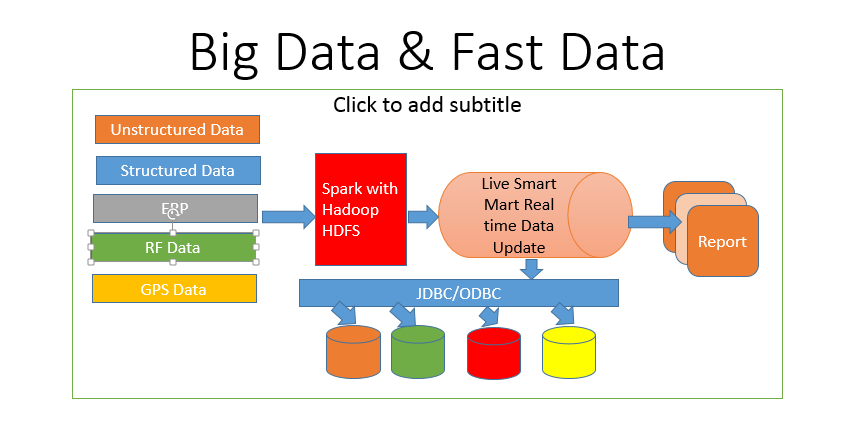

Can Hadoop be used as a real-time RDBMS? Yes, a solution is now available with Splice Machine. What it does:

Images: Splicemachine.com

Splice Machine’s high-performance, distributed computing architecture delivers massive parallelization for predicates, joins, aggregations, and functions by pushing computation down to each distributed data shard.

Big Data is mainly based on three Vs: Volume, Velocity, and Variety.

Volume: high volumes of data, from GB to PB.

Velocity: real time or near real time.

Variety: structured and unstructured data (Twitter, Facebook, etc.).

Fast Data: real-time and near-real-time updates are possible with Spark, using HDFS, cloud S3, or Cassandra as storage. The process is shown in the image above.

We can use Spark for the following domains:


AUTHOR: Ajit Dash. 24+ years’ experience in Data Analytics, Data Science, Databases, Data Warehousing, Business Analytics, Business Intelligence, Big Data, etc.
