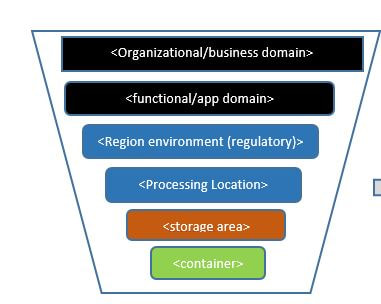

For the best data insight and data visibility, it is important to separate source data based on the following factors:

- Types of data: information about the types of data and their frequency (batch, real-time or near real-time mode)

- Usage: what the data can be used for

- End user: who will use the data, and for what purpose

- Long-term and short-term usage: how long the data needs to be used

- Maximum usability: whether there is a usage limit on the data

- Expiry date: whether there is a timeline after which the data expires

- Based on the above points, separate the data.

Keep frequently used data in faster-access storage or a faster DB.

Store less frequently accessed or unused data in a different storage location.

Take a current snapshot or backup of the data before purging it from the fast-access DB.
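The separation rules above can be sketched as a small tiering function. This is a minimal illustration under stated assumptions: `Dataset`, `choose_tier`, `plan` and the `HOT_THRESHOLD` of 100 accesses per month are all hypothetical, and a real setup would read access statistics and expiry dates from your own catalog.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class Dataset:
    name: str
    accesses_per_month: int        # observed access frequency
    expiry: Optional[date] = None  # None means the data never expires

# Hypothetical threshold: datasets read at least this often stay in fast storage.
HOT_THRESHOLD = 100

def choose_tier(ds: Dataset, today: date) -> str:
    """Return 'purge', 'hot' or 'cold' for one dataset."""
    if ds.expiry is not None and ds.expiry < today:
        return "purge"   # past its expiry date: snapshot it, then purge
    if ds.accesses_per_month >= HOT_THRESHOLD:
        return "hot"     # frequently used: fast-access storage or DB
    return "cold"        # rarely used or unused: separate, cheaper storage

def plan(datasets, today):
    """Group dataset names by tier and list the snapshots to take before purging."""
    tiers = {"hot": [], "cold": [], "purge": []}
    for ds in datasets:
        tiers[choose_tier(ds, today)].append(ds.name)
    # Back up everything scheduled for purge before it leaves the fast DB.
    snapshots = [f"snapshot-{name}" for name in tiers["purge"]]
    return tiers, snapshots

# Usage with made-up datasets.
today = date(2023, 12, 1)
data = [
    Dataset("orders", 5000),
    Dataset("audit_2019", 2),
    Dataset("promo_feed", 300, expiry=date(2023, 6, 30)),
]
tiers, snaps = plan(data, today)
print(tiers)  # {'hot': ['orders'], 'cold': ['audit_2019'], 'purge': ['promo_feed']}
print(snaps)  # ['snapshot-promo_feed']
```

Checking expiry before access frequency means an expired dataset is purged (after its snapshot) even if it is still read often.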


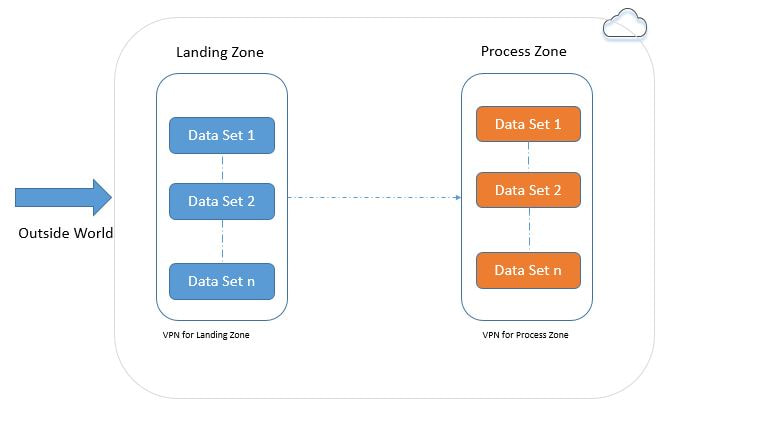

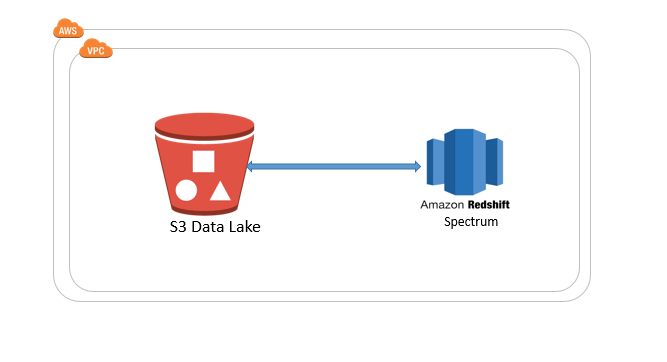

Separate the Data Landing Zone and the Data Process Zone by using separate VPNs. This reduces security threats from the outside world. The landing zone can also serve as both the data upload zone and the data download zone:

- Data upload: where customers upload their data
- Data download: where customers download the processed data

Redshift Spectrum

Advantages

HIPAA Requirements:

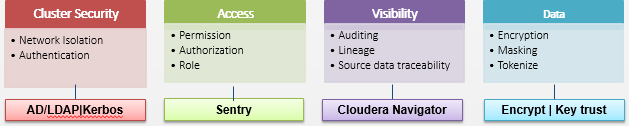
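Tokenization and masking, two of the HIPAA visibility controls listed in the Cloudera notes here, can be illustrated generically. This is not Cloudera's API: it is a stdlib-only sketch that uses an HMAC-SHA256 digest as a deterministic token and masks all but the last four characters. `TOKEN_KEY`, `tokenize` and `mask` are hypothetical names, and in practice the key would come from a key manager stored separately from the data.

```python
import hashlib
import hmac

# Hypothetical secret; a real deployment would fetch this from a key manager,
# never hard-code it, and keep it apart from the data it protects.
TOKEN_KEY = b"example-secret-key"

def tokenize(value: str, key: bytes = TOKEN_KEY) -> str:
    """Replace a sensitive value with a deterministic token (same input -> same token)."""
    digest = hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]

def mask(value: str, visible: int = 4, fill: str = "*") -> str:
    """Hide all but the last `visible` characters of a value."""
    if len(value) <= visible:
        return fill * len(value)
    return fill * (len(value) - visible) + value[-visible:]

# Usage with a made-up record.
record = {"patient_id": "P-000123", "ssn": "123-45-6789", "diagnosis": "J45"}
safe = {
    "patient_id": tokenize(record["patient_id"]),  # still joinable across tables
    "ssn": mask(record["ssn"]),                    # partially visible for support staff
    "diagnosis": record["diagnosis"],
}
print(safe["ssn"])  # *******6789
```

A deterministic token keeps joins possible across datasets without exposing the raw identifier; a vault-based tokenization scheme would be needed if the original value must be recoverable.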

Cloudera: Cloudera Navigator and Key Trustee address the HIPAA requirements.

- Navigator Encrypt is an integrated part of Cloudera and provides a transparent data encryption (TDE) layer for any Linux application without changing the data or the application.
- Encryption follows the FIPS 140-2 and NIST standards and uses AES. Keys are strongly protected with several layers of cryptography and are stored separately from the encrypted files.
- Process-based access control for encrypted files allows only authorized processes to use the decryption key, so even in case of a hack or physically compromised access, the encrypted files remain protected.
- Key Trustee is a universal key manager used with Navigator Encrypt to manage all cryptographic keys, certificates, configuration files and any other “opaque object” used to secure the most sensitive data. It adds a secure layer on top of existing security for authorized access to data in the cloud.
- Built-in security features for the Hadoop cluster, through Kerberos and Sentry, provide role-based permissions and secure access for data in and out of Hadoop.
- Cloudera Navigator lineage and audit features provide data traceability, which is a HIPAA requirement.
- Unauthorized visibility is controlled through data tokenization, masking and encryption.

Source: Cloudera.com

As per my previous post, AWS Lambda is priced according to memory usage. Here are some sample calculations (please refer to the pricing table below):

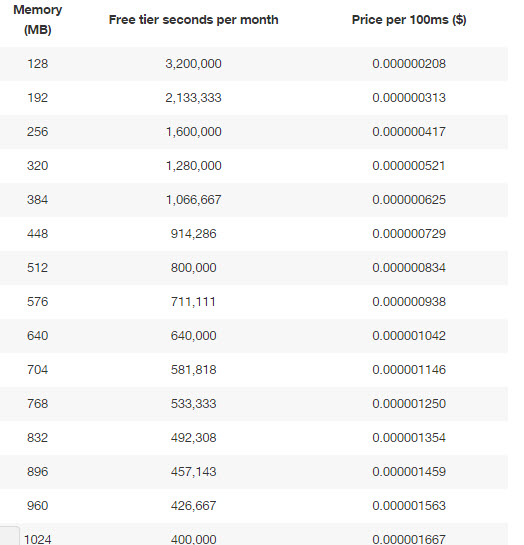

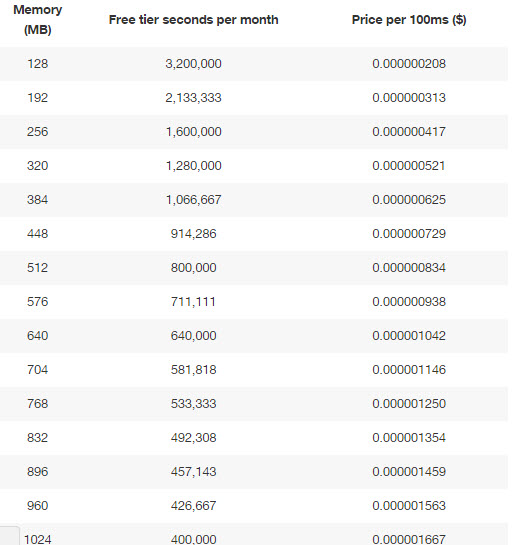
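The Lambda pricing arithmetic in this post can be reproduced with a small calculator. This is a sketch that hard-codes the rates and free-tier amounts quoted in the post (they may have changed since); `billed_seconds` and `monthly_cost` are hypothetical helper names. Duration is rounded up to a billing increment of 100 ms, as described here; AWS has since moved to 1 ms granularity, which corresponds to `increment_ms=1`.

```python
import math

# Rates and free-tier amounts as quoted in this post.
PRICE_PER_GB_SECOND = 0.00001667
PRICE_PER_MILLION_REQUESTS = 0.20
FREE_GB_SECONDS = 400_000
FREE_REQUESTS = 1_000_000

def billed_seconds(duration_ms: float, increment_ms: int = 100) -> float:
    """Round one invocation's duration up to the billing increment, in seconds."""
    return math.ceil(duration_ms / increment_ms) * increment_ms / 1000

def monthly_cost(memory_mb: int, invocations: int, duration_ms: float) -> dict:
    """Monthly compute, request and total charges in dollars, after the free tier."""
    seconds = invocations * billed_seconds(duration_ms)
    gb_seconds = seconds * memory_mb / 1024
    compute = max(gb_seconds - FREE_GB_SECONDS, 0) * PRICE_PER_GB_SECOND
    billable_requests = max(invocations - FREE_REQUESTS, 0)
    requests = billable_requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    return {
        "compute": round(compute, 2),
        "requests": round(requests, 2),
        "total": round(compute + requests, 2),
    }

# The worked example: 512 MB, 4 million invocations, 1 second each.
print(monthly_cost(memory_mb=512, invocations=4_000_000, duration_ms=1000))
# {'compute': 26.67, 'requests': 0.6, 'total': 27.27}
```

With 100 ms rounding, a 1,001 ms invocation is billed as 1.1 s, which is why the increment matters for short functions.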

If you allocated 512 MB of memory to your function, executed it 4 million times in one month, and it ran for 1 second each time, your charges would be calculated as follows:

Monthly compute charges

The monthly compute price is $0.00001667 per GB-s and the free tier provides 400,000 GB-s.

- Total compute (seconds) = 4M × 1 s = 4,000,000 seconds
- Total compute (GB-s) = 4,000,000 × 512 MB / 1024 = 2,000,000 GB-s
- Monthly billable compute (GB-s) = total compute − free tier compute = 2,000,000 GB-s − 400,000 free tier GB-s = 1,600,000 GB-s
- Monthly compute charges = 1,600,000 × $0.00001667 = $26.67

Monthly request charges

The monthly request price is $0.20 per 1 million requests and the free tier provides 1M requests per month.

- Monthly billable requests = total requests − free tier requests = 4M − 1M = 3M
- Monthly request charges = 3M × $0.20/M = $0.60

Total monthly charges

- Total charges = compute charges + request charges = $26.67 + $0.60 = $27.27 per month

https://aws.amazon.com/lambda/pricing/

Request:

- First 1 million requests are free.
- Above 1 million requests: $0.20 per 1 million requests ($0.0000002 per request).

Duration:

Duration is calculated from the time your code begins executing until it returns or otherwise terminates, rounded up to the nearest 100 ms (AWS has since moved to 1 ms billing granularity). The price depends on the amount of memory you allocate to your function. You are charged $0.00001667 for every GB-second used.

Free tier:

The Lambda free tier includes 1M free requests per month and 400,000 GB-seconds of compute time per month. The Lambda free tier does not automatically expire at the end of your 12-month AWS Free Tier term, but is available to both existing and new AWS customers indefinitely. (As per AWS) https://aws.amazon.com/lambda/pricing/

LAMBDA:

AWS Lambda runs your code on high-availability compute infrastructure (you just need to provide your code).

It does the following activities

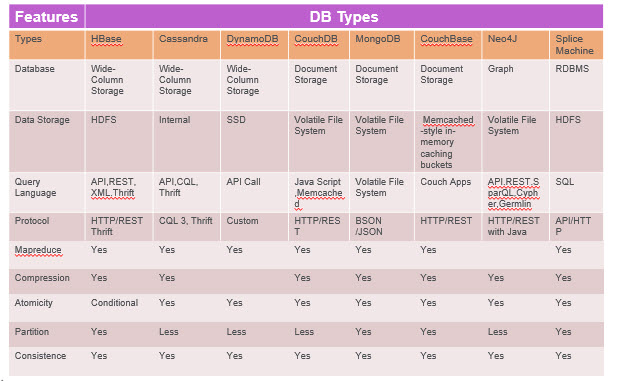

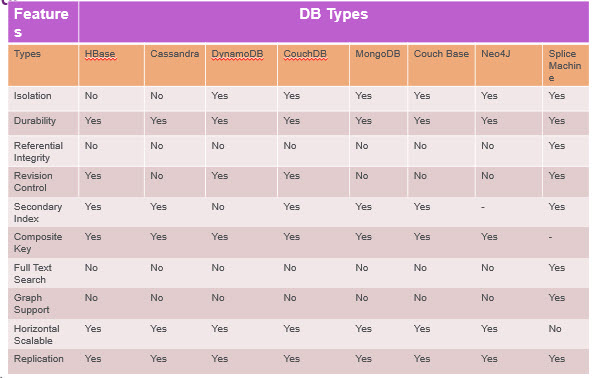

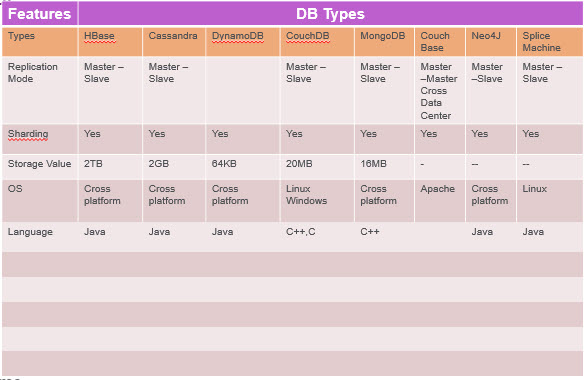
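Since with Lambda you only provide the code, a Python function is just a handler that Lambda calls with an `event` and a `context` object for each invocation. The sketch below is a minimal example under assumptions: the handler name `lambda_handler` is configurable in the function settings, and the `statusCode`/`body` response shape assumes the function sits behind an API Gateway proxy integration.

```python
import json

def lambda_handler(event, context):
    """Entry point Lambda invokes for each request; `context` is unused here."""
    name = event.get("name", "world")  # read an optional field from the event
    return {
        "statusCode": 200,
        "body": json.dumps({"message": f"Hello, {name}!"}),
    }

# Local test: in AWS, Lambda supplies the event and context objects.
if __name__ == "__main__":
    print(lambda_handler({"name": "Lambda"}, None))
```

Everything outside the handler (servers, scaling, availability) is managed by Lambda, which is why billing is based only on requests and GB-seconds.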

HBASE:

Main Characteristics

Cassandra: Key Characteristics

Key Characteristics:

Suitable for:

Not Suitable for:

HBASE:

Cassandra:

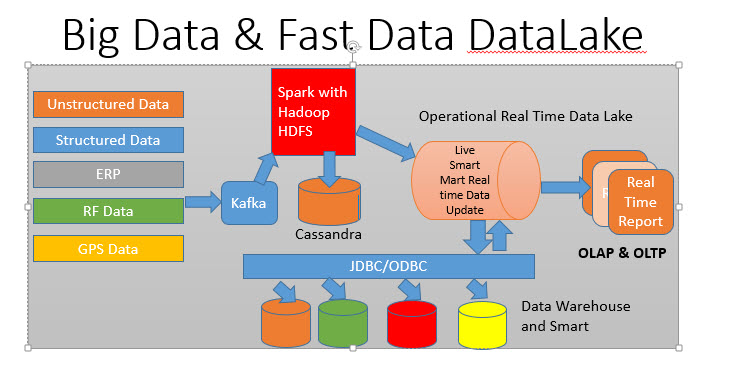

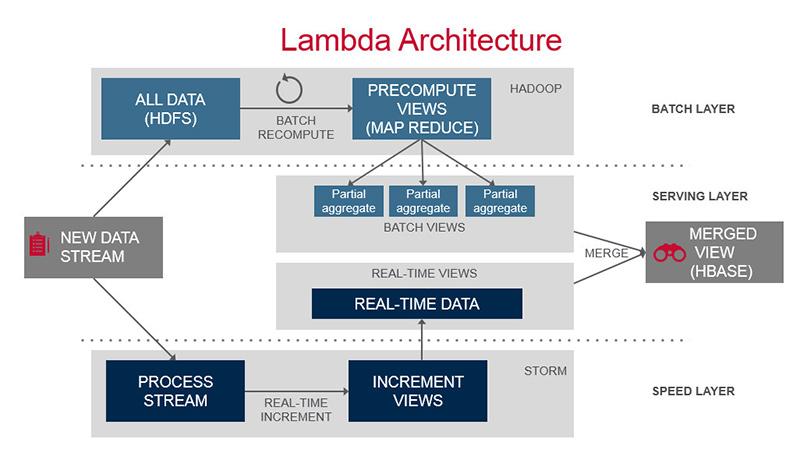

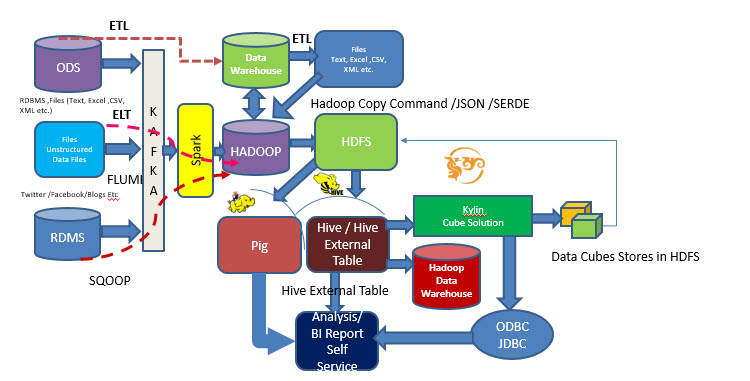

Lambda Architecture can be used in batch mode, real-time mode, or a combination of both.
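As a rough illustration of how the batch, real-time (speed) and serving layers fit together, here is a toy page-view counter in plain Python. It is a sketch, not MapReduce or Spark code: `batch_layer` stands in for a batch job that recomputes a view from the full master dataset, `speed_layer` for a stream job that updates a view per event, and `serving_layer` merges the two at query time. All names and the sample events are hypothetical.

```python
from collections import Counter

# Hypothetical (page, timestamp) events.
master_dataset = [("home", 1), ("cart", 2), ("home", 3)]  # covered by the last batch run
recent_events = [("home", 4), ("checkout", 5)]            # arrived after that batch run

def batch_layer(events):
    """Recompute the batch view from scratch over the whole master dataset."""
    return Counter(page for page, _ in events)

def speed_layer(stream, view=None):
    """Incrementally update a real-time view, one event at a time."""
    view = Counter() if view is None else view
    for page, _ in stream:
        view[page] += 1
    return view

def serving_layer(batch_view, realtime_view, page):
    """Answer a query by merging the batch view with the real-time view."""
    return batch_view[page] + realtime_view[page]

batch_view = batch_layer(master_dataset)
realtime_view = speed_layer(recent_events)
print(serving_layer(batch_view, realtime_view, "home"))      # 3
print(serving_layer(batch_view, realtime_view, "checkout"))  # 1
```

When the next batch run absorbs the recent events, the real-time view for that period can be discarded, which is what keeps the speed layer small.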

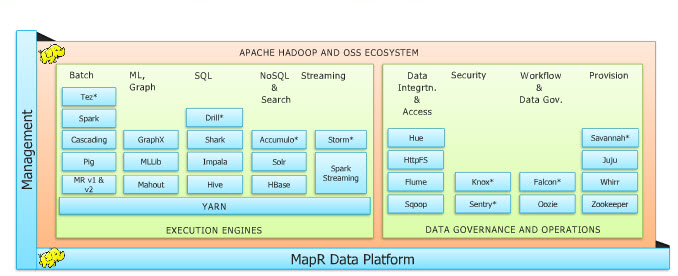

Works in three modes:

1. Batch mode (MapReduce): provides analytics up to near real time, on semi-aggregated data.
2. Real-time mode (stream processing with Spark): provides real-time analytics.
3. Mixed mode (integrating stream and batch files): the serving layer may pull data from a NoSQL DB.

Image reference: MapR

Cloudera and Hortonworks: The Similarities

Cloudera and Hortonworks are both built on the same core, Apache Hadoop. As such, they have more similarities than differences.

Cloudera vs. Hortonworks: The Differences

That being said, it is the differences that decide the choice of one vendor over the other. Broadly, Cloudera and Hortonworks differ in the following aspects:

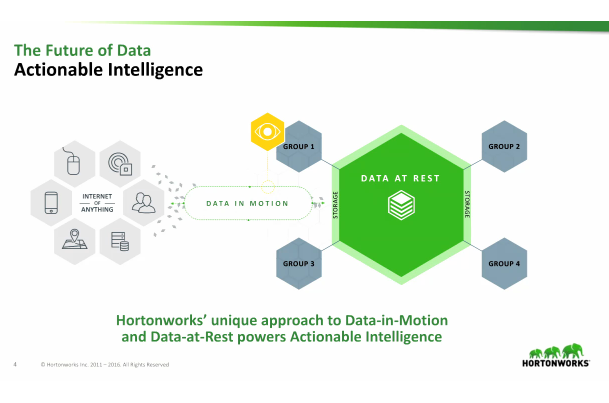

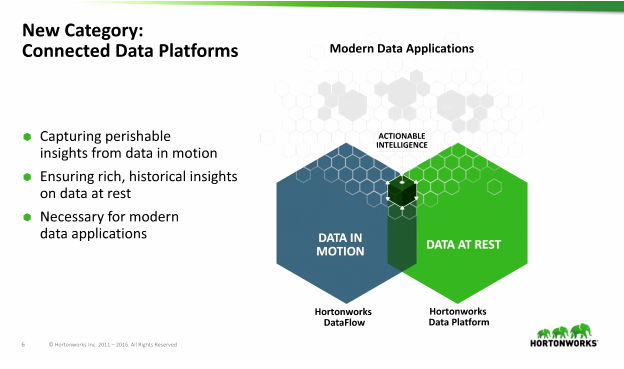

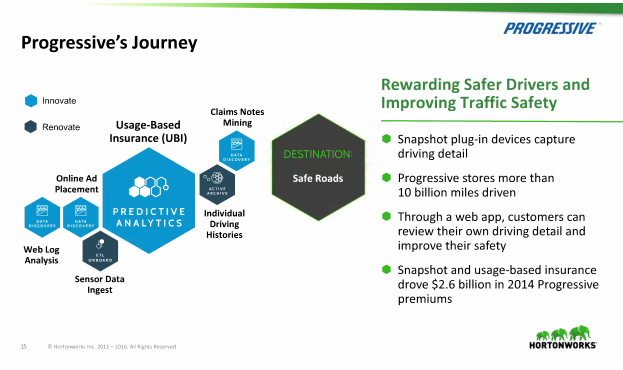

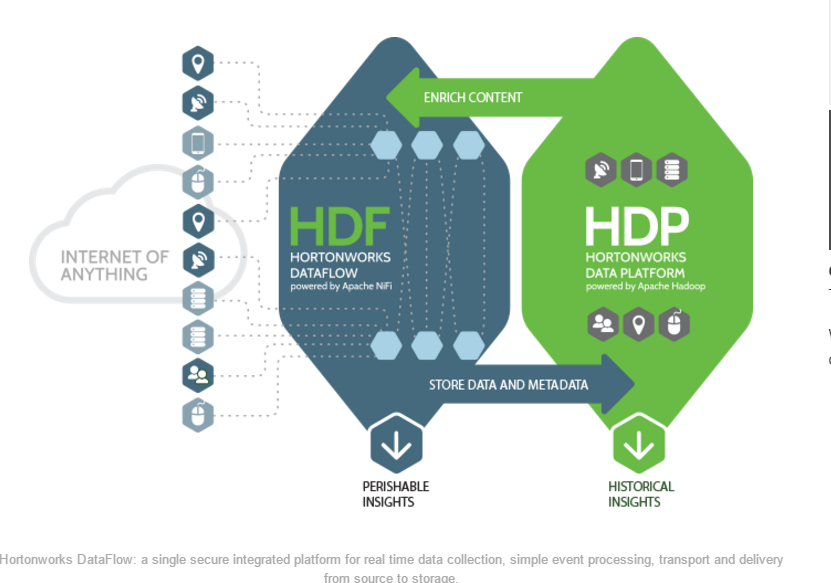

\* May contain information collected from third parties.

\*\* Information collected from best-practices articles.

So far, most analytics have been based on data at rest. But analytics will be more accurate if they also use data in motion (live data). This is possible with the Hortonworks platforms, which provide actionable analytics using both live data (data in motion) and data at rest.

Actionable intelligence: how IoT data gets processed. Hortonworks DataFlow for data in motion and Hortonworks Data Platform for data at rest together create actionable intelligence.

Hortonworks DataFlow (HDF), powered by Apache NiFi, is the first integrated platform that solves the real-time complexity and challenges of collecting and transporting data from a multitude of sources, be they big or small, fast or slow, always connected or intermittently available. An ideal solution for the Internet of Any Thing (IoAT), HDF enables simple, fast data acquisition, secure data transport, prioritized data flow and clear traceability of data from the very edge of your network all the way to the core data center.

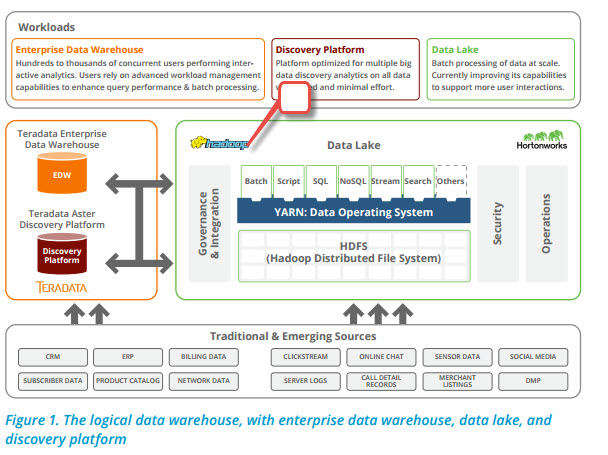

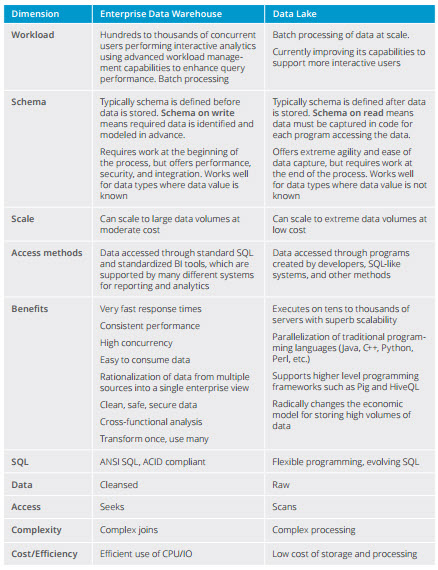

While a hierarchical data warehouse stores data in files or folders, a data lake uses a flat architecture to store data. Each data element in a lake is assigned a unique identifier and tagged with a set of extended metadata tags. When a business question arises, the data lake can be queried for relevant data, and that smaller set of data can then be analyzed to help answer the question.
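The flat, tag-based layout described above, together with the schema-on-read loading mentioned later in this post, can be sketched as a toy in-memory store. This is only an illustration, not how Hadoop or any data lake product is implemented: the `DataLake` class, its `put`/`find`/`read` methods and the sample records are hypothetical. Raw bytes are stored unchanged with a UUID and a tag set, and a schema is applied only when a record is read.

```python
import json
import uuid

class DataLake:
    """Toy flat store: every element gets a unique ID plus metadata tags."""

    def __init__(self):
        self.objects = {}   # id -> raw bytes, stored as-is (no schema check on load)
        self.metadata = {}  # id -> set of tags

    def put(self, raw: bytes, tags) -> str:
        """Load raw data without validation and return its unique identifier."""
        object_id = str(uuid.uuid4())
        self.objects[object_id] = raw
        self.metadata[object_id] = set(tags)
        return object_id

    def find(self, *tags):
        """Return IDs of elements that carry all of the requested tags."""
        wanted = set(tags)
        return [oid for oid, t in self.metadata.items() if wanted <= t]

    def read(self, object_id, schema):
        """Schema on read: parse the raw JSON and cast only the fields in `schema`."""
        record = json.loads(self.objects[object_id])
        return {field: cast(record[field]) for field, cast in schema.items()}

# Usage with made-up data.
lake = DataLake()
a = lake.put(b'{"id": "7", "amount": "19.5", "note": "x"}', ["sales", "2023"])
lake.put(b"raw,csv,line", ["logs"])  # accepted even though it is not JSON
ids = lake.find("sales")
print(lake.read(ids[0], {"id": int, "amount": float}))  # {'id': 7, 'amount': 19.5}
```

Because nothing is validated at load time, the CSV line is stored happily; it would only fail if someone tried to read it with a JSON schema, which is the trade-off of schema on read.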

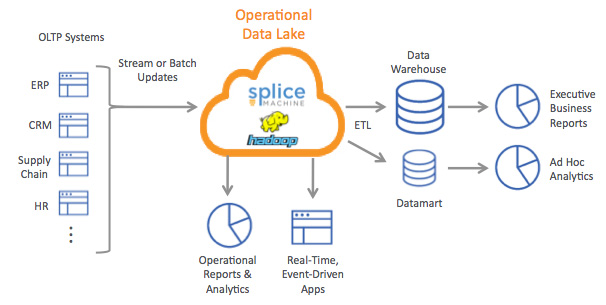

Splice Machine

- Best suited: Splice Machine can be used on top of Hadoop with HBase. It gives you the features of an RDBMS, so OLTP and OLAP reporting can be done easily, and processing is much faster than with a traditional NoSQL DB.
- Example: real-time data processing, OLTP and OLAP reporting

HBase

- Best used: huge data storage, with MapReduce used for processing
- Example: search engines, analysis of huge data

Cassandra

- Best used: huge data storage, real-time processing and analysis with Spark
- Example: real-time log or feed analysis

DynamoDB

- Best used: fast responses and heavy query loads; scalable, with latency control and fast queries

CouchDB

- Best used: collections of occasionally changing data on which predefined queries are run; best for versioning
- Example: CRM, CMS

MongoDB

- Best used: dynamic queries and defined indexes without MapReduce; suited to big databases
- Example: bulk storage

Couchbase

- Best used: applications needing low-latency data access, high availability and high concurrency
- Example: web applications with high concurrency, games

Neo4j

- Best used: graphs and interconnected data
- Example: route search, network topology, maps, etc.

Data Lake:

A data lake is a storage repository that holds a vast amount of raw data in its native format until it is needed. While a traditional hierarchical data warehouse stores data in files and folders, a data lake uses a flat architecture to store the data. When a data element is stored in the data lake, it is assigned a unique identifier and its information is stored as metadata, which can easily be queried for a given requirement. The underlying storage infrastructure is usually Hadoop.

Data is usually loaded into the data lake from different sources using ELT tools (Sqoop, command line, scripts, Talend, Pentaho, etc.). The load is very fast because it runs in parallel and no schema check happens while loading: the schema is checked only when the data is read. "Data lake" is also a marketing term used for large sets of data.

Splice Machine example: using Splice Machine as an operational DB (photo courtesy Splice Machine).

Hive 0.14 allows CRUD (Create, Read, Update, Delete) operations:

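```sql
-- Hive 0.14+ DML syntax; square brackets mark optional parts.
-- UPDATE and DELETE work only on ACID (transactional) tables.
INSERT INTO TABLE tablename [PARTITION (partcol1[=val1], partcol2[=val2] ...)] VALUES values_row [, values_row ...];
UPDATE tablename SET column = value [, column = value ...] [WHERE expression];
DELETE FROM tablename [WHERE expression];
```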

Author: Ajit Dash, 24+ years' experience in data analytics, data science, databases, data warehousing, business analytics, business intelligence, big data, etc.

Archives

December 2023
